Loan Approval(Logistic Regression)

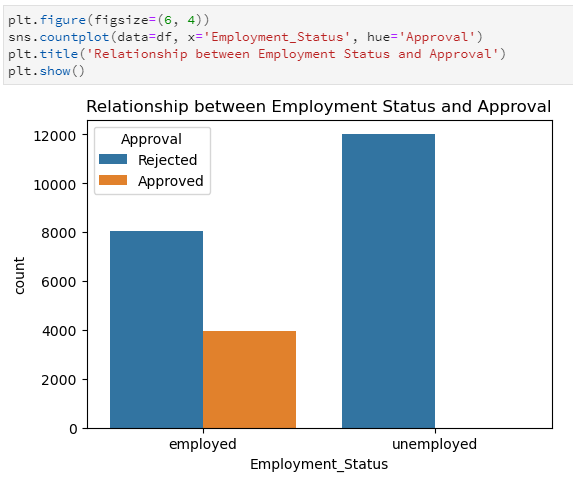

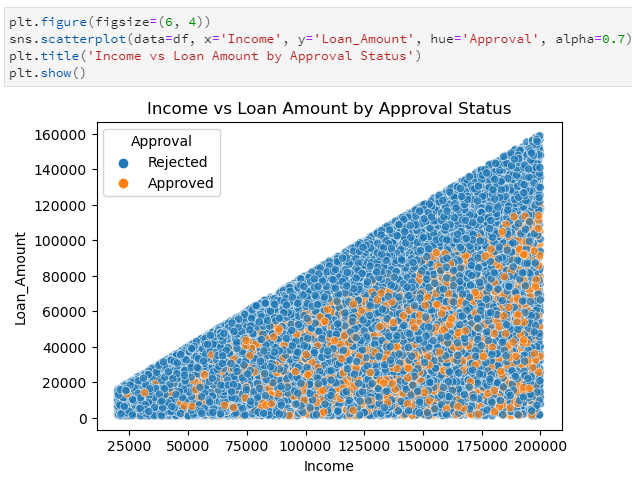

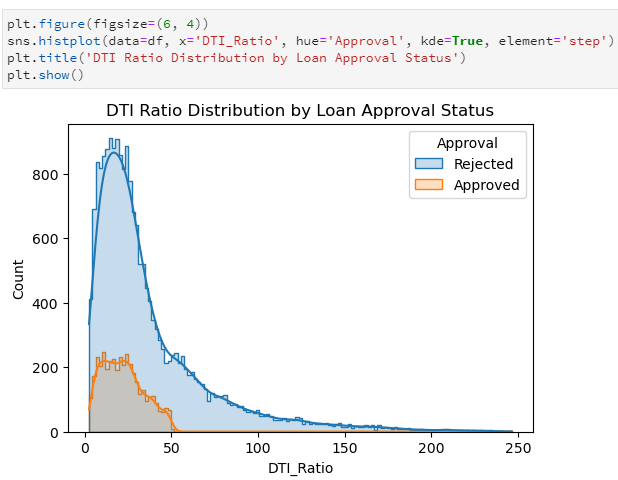

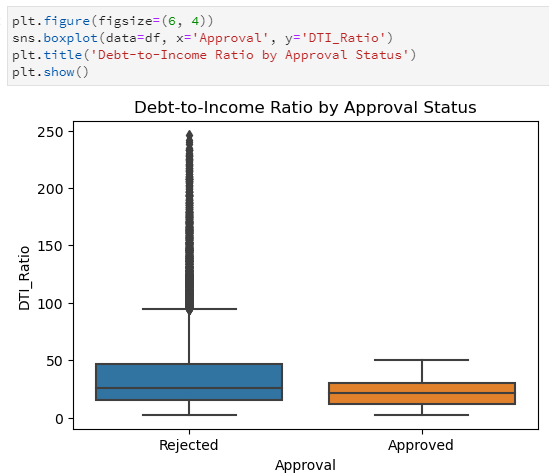

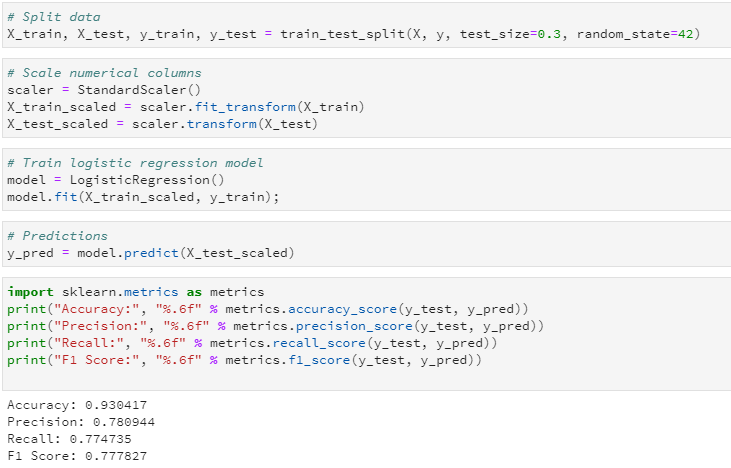

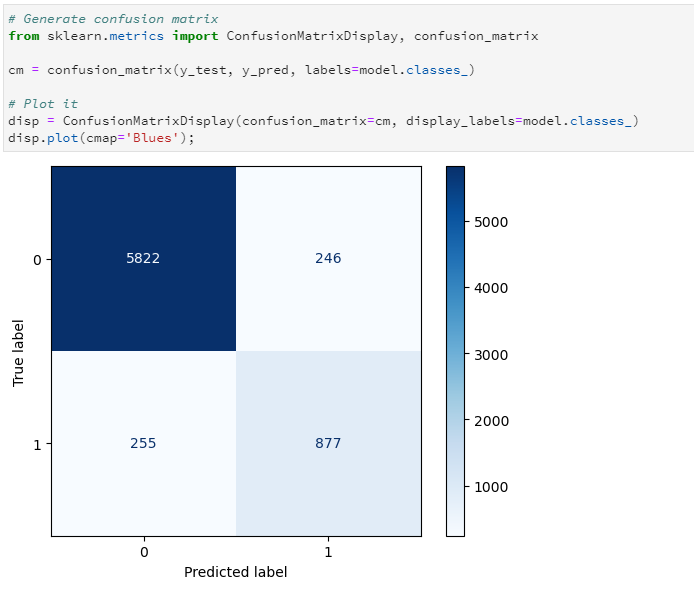

In this project, we explore predictive analytics in the context of loan approvals. Our goal is to understand the factors influencing approval decisions and assess how accurately we can predict them. We begin by examining the dataset and uncovering patterns through targeted visualizations. These insights then guide us into building a logistic regression model, allowing us to quantify relationships and evaluate the model’s predictive power.

The data has been picked from kaggle: https://www.kaggle.com/datasets/abhishekmishra08/loan-approval-datasets?resource=download&select=loan_data.csv

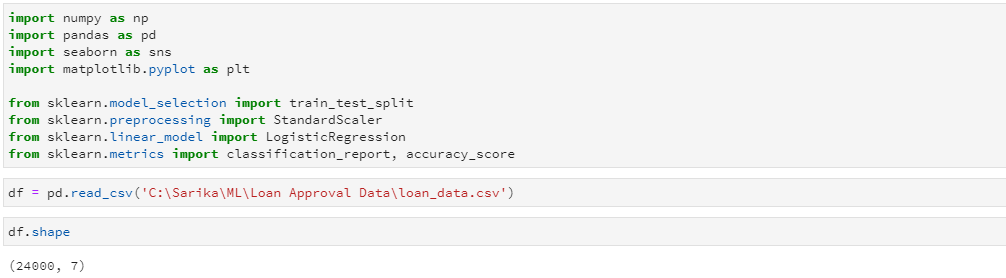

Let's import the libraries and read the source file. There are 24,000 rows in the file.

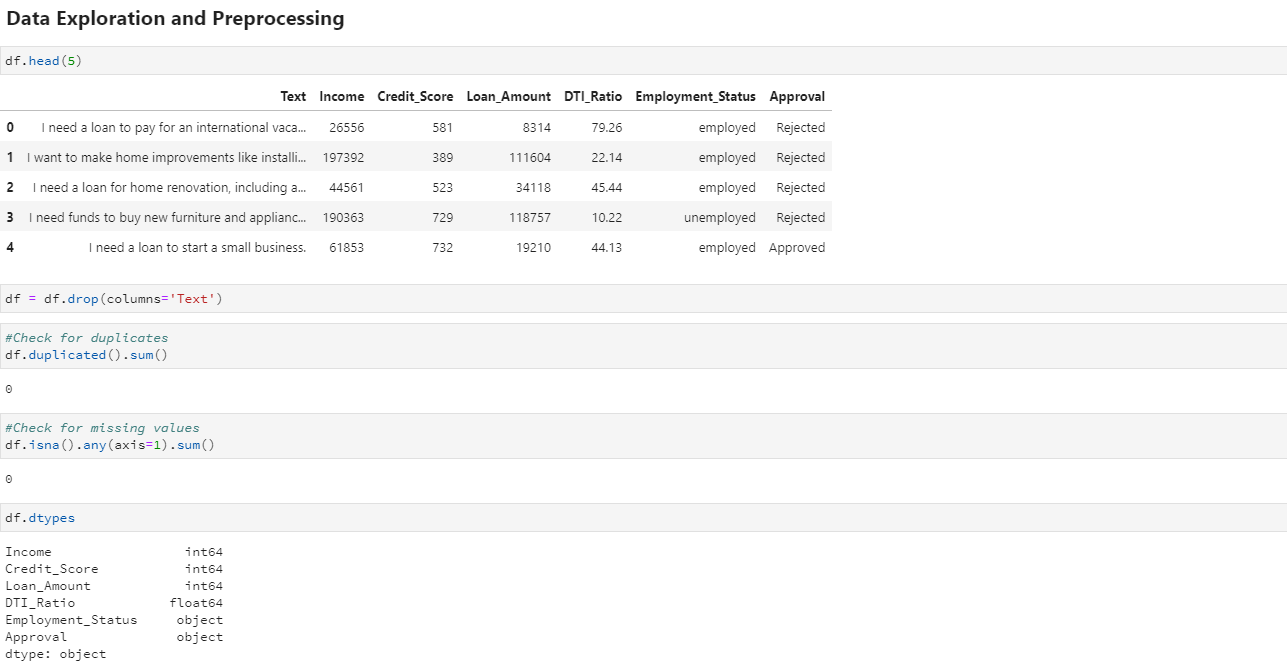

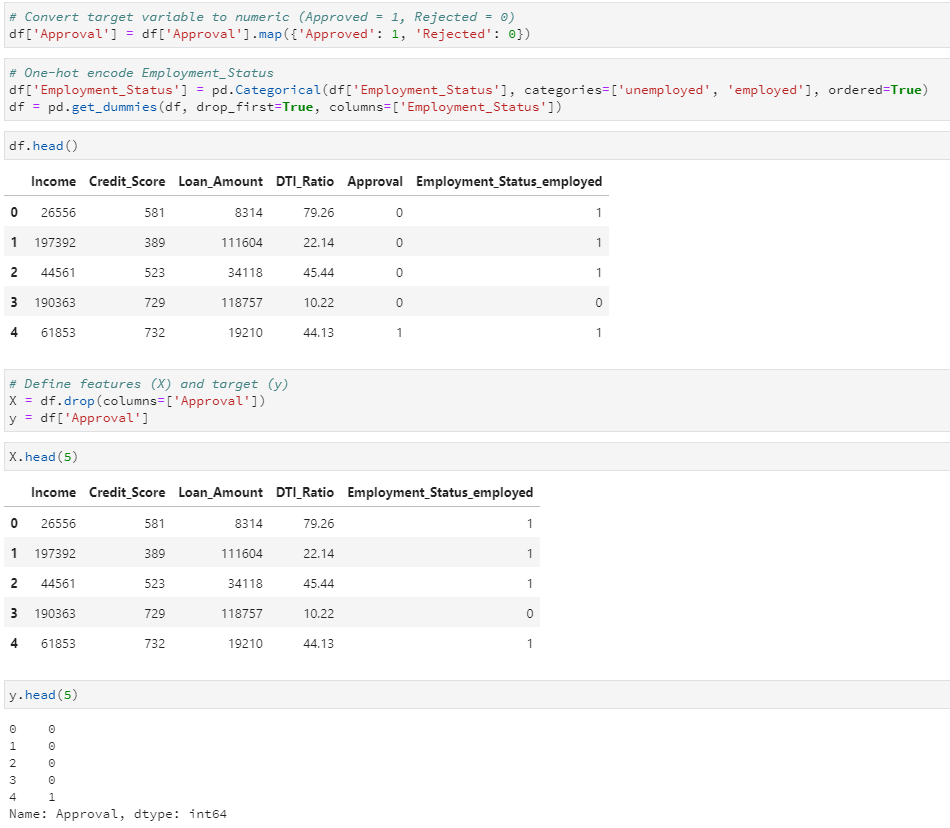

Now, let's explore the dataset and perform preprocessing if needed. We'll check for and remove any duplicate rows or missing values to ensure data quality before moving into analysis.